Dynamic programming is a technique for solving complex problems by breaking them into subproblems. Each stage of a dynamic programming solution is a decision-making step: the decision taken at a stage is the one that keeps the upcoming stages optimal.

To carry out dynamic programming, the following key areas have to be considered:

- Problem set

- Methods used to define problem set

- Recursion

- Terminating condition

- Overlapping Subproblems

- Optimization technique

The problem set should be concise: the more concise it is, the better the time and space efficiency. The problem set is generated using conditions that filter its elements, and these filter conditions are defined according to the requirements of the problem.

In dynamic programming, the solution formula is applied to the problem set to produce a result. The formula is then applied again to that result, and the process continues until the objective is reached. Each application of the formula must yield the optimal value for its subproblem.
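
As a minimal sketch of this repeated application, assume the well-known coin-change problem (an illustration chosen here, not an example from the text above). The function name `min_coins` and the coin values are hypothetical. The same formula is applied to each amount in turn, and every entry of the table holds the optimal value for its own subproblem.

```python
def min_coins(coins, amount):
    """Return the fewest coins that sum to `amount`, or None if impossible."""
    INF = float("inf")
    # best[a] holds the optimal (smallest) coin count for amount a.
    best = [0] + [INF] * amount
    for a in range(1, amount + 1):
        for c in coins:
            # Solution formula: reuse the optimal result for the smaller amount.
            if c <= a and best[a - c] + 1 < best[a]:
                best[a] = best[a - c] + 1
    return None if best[amount] == INF else best[amount]


print(min_coins([1, 5, 6, 9], 11))  # 2  (5 + 6)
```

Each table entry is computed once from earlier entries, so the final answer is built entirely out of optimal partial results.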

A problem can be treated as a dynamic programming problem only if its solution can be expressed in terms of solutions to smaller instances of the same problem and it contains conditions that lead to termination. Recognizing a problem as a dynamic programming problem and defining tight boundary conditions depends on the experience of the analyst.

Dynamic programming is used in mathematics, computer programming, bioinformatics, and other fields.

The following methods are often used to generate the problem set for dynamic programming:

- Combinatorial Method

- Generating functions

- Transfer matrices

- Recurrence relation

- Graphical representation

The combinatorial method is based on binomial expressions, which are used to find the possible filter conditions that may form a problem set.
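
One place where binomial expressions and dynamic programming meet is Pascal's rule, C(n, k) = C(n-1, k-1) + C(n-1, k). The sketch below is only an illustration of that link; the function name `binomial` is an assumption, and the text above does not prescribe this particular computation.

```python
def binomial(n, k):
    """Compute C(n, k) with Pascal's rule, keeping a single row of the triangle."""
    if k < 0 or k > n:
        return 0
    row = [1] + [0] * k  # row[j] = C(0, j) to begin with
    for _ in range(n):
        # Update right to left so row[j - 1] still holds the previous row's value.
        for j in range(k, 0, -1):
            row[j] += row[j - 1]
    return row[k]


print(binomial(5, 2))  # 10
```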

Generating functions are formed from sequences. The sequence determines the conditions and the complexity of the problem. The generating function is used to investigate the recurrence of the sequence, and the condition used to build the problem set is chosen on that basis.

Transfer matrices are used to develop relation matrices based on qualifying conditions, which are defined in advance. If the problem is fully known at the outset, an optimal problem set can be obtained using simple matrix operations.
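
A small sketch of the transfer-matrix idea, under an assumed example problem: counting binary strings of length n that contain no two adjacent 1s. The qualifying condition is encoded in the matrix `T` (a 1 may not follow a 1), and the count is read off a matrix power. All names here are illustrative.

```python
def mat_mul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def mat_pow(m, exponent):
    """Raise a square matrix to a non-negative integer power by repeated squaring."""
    size = len(m)
    result = [[int(i == j) for j in range(size)] for i in range(size)]  # identity
    while exponent > 0:
        if exponent & 1:
            result = mat_mul(result, m)
        m = mat_mul(m, m)
        exponent >>= 1
    return result


def count_strings(n):
    """Count binary strings of length n with no two adjacent 1s."""
    if n == 0:
        return 1  # only the empty string
    # T[i][j] = 1 when symbol j is allowed to follow symbol i.
    T = [[1, 1],
         [1, 0]]
    return sum(sum(row) for row in mat_pow(T, n - 1))


print([count_strings(n) for n in range(1, 6)])  # [2, 3, 5, 8, 13]
```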

Recurrence relations define a sequence based on a predefined condition. In a recurrence relation, the next term is a function of the previous terms. A problem may be defined using a recurrence relation.

Graphical representation is used when the problem set consists of numerical values. A graph is used to develop a relation among the values, and this graph is then used to form the problem set. The graph depicts the relationship between two variables that are themselves elements of the problem set, and that relationship is expressed algebraically.

Recursion in dynamic programming is used to define the problem set. The conditions that generate the problem set should define each element in terms of other elements of the set; when this is achieved, the process is recursive and is described by a recurrence relation. Using a recurrence relation, a problem set can be generated from a minimal set of initial values and rules. The logic of the recursion must be arranged so that it does not lead to infinite execution of the function.
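
The idea of generating a set from a few initial values and one rule can be sketched as follows. The helper `generate_sequence` and the two example sequences are assumptions made for illustration.

```python
def generate_sequence(initial, rule, count):
    """Return the first `count` terms defined by `initial` values and a `rule`.

    `rule` receives the list of terms produced so far and returns the next term.
    """
    terms = list(initial[:count])
    while len(terms) < count:
        terms.append(rule(terms))
    return terms


# Fibonacci: two initial values, each new term is the sum of the previous two.
print(generate_sequence([0, 1], lambda t: t[-1] + t[-2], 10))
# [0, 1, 1, 2, 3, 5, 8, 13, 21, 34]

# Tribonacci: three initial values, each new term is the sum of the previous three.
print(generate_sequence([0, 0, 1], lambda t: sum(t[-3:]), 8))
# [0, 0, 1, 1, 2, 4, 7, 13]
```

The loop stops once `count` terms exist, so the generation is bounded even though the rule itself could be applied forever.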

The most critical property of the problem set in dynamic programming is that it is recursive. For the recursion to terminate, the set of subproblems reachable from a given input must be finite. Set operations such as union and intersection applied to recursive sets again yield recursive sets.

Terminating conditions in recursive functions are important: if a terminating condition is never reached, the function executes indefinitely. Terminating conditions should be formulated so that the number of recursive calls is kept to a minimum. The fewer the recursive calls, the better the time complexity of the dynamic programming solution.
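
A minimal sketch of a terminating condition, using an assumed example: counting the monotone paths across a grid when only "down" and "right" moves are allowed. The function name `grid_paths` is hypothetical. The base case stops the recursion as soon as one dimension reaches zero, instead of recursing all the way down to the origin.

```python
def grid_paths(rows, cols):
    """Count paths that take `rows` down-steps and `cols` right-steps."""
    if rows < 0 or cols < 0:
        raise ValueError("rows and cols must be non-negative")
    # Terminating condition: along an edge of the grid only one path remains.
    if rows == 0 or cols == 0:
        return 1
    # Every call reduces rows + cols by one, so the base case is always reached.
    return grid_paths(rows - 1, cols) + grid_paths(rows, cols - 1)


print(grid_paths(2, 2))  # 6
```

Without the `rows == 0 or cols == 0` check, the arguments would keep decreasing past zero and the function would never return.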

Overlapping subproblems in dynamic programming arise from recursion: the sets of subproblems generated by different recursive calls have a non-empty intersection. This intersection is called the overlapping subproblems. To improve the efficiency of dynamic programming, the repeated execution of these shared subproblems must be eliminated.

Overlapping of subproblems is a desirable characteristic in dynamic programming, but repeated execution of the overlapping subproblems is not. To avoid it, the result of each subproblem is stored so that when the same subproblem is requested again, the saved result is reused. This mechanism improves time complexity, at the cost of the additional space needed to store the results.
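
The effect of storing subproblem results can be sketched by counting how many times a function body actually runs. The Fibonacci example and the names `fib_naive` and `fib_memo` are assumptions chosen for illustration; the one-element list `counter` is just a mutable call counter.

```python
def fib_naive(n, counter):
    """Plain recursion: overlapping subproblems are recomputed every time."""
    counter[0] += 1
    if n < 2:
        return n
    return fib_naive(n - 1, counter) + fib_naive(n - 2, counter)


def fib_memo(n, counter, cache=None):
    """Same recurrence, but each subproblem is evaluated once and then reused."""
    if cache is None:
        cache = {}
    if n in cache:
        return cache[n]  # saved result, no recomputation
    counter[0] += 1
    result = n if n < 2 else fib_memo(n - 1, counter, cache) + fib_memo(n - 2, counter, cache)
    cache[n] = result
    return result


naive_calls, memo_calls = [0], [0]
print(fib_naive(20, naive_calls), naive_calls[0])  # 6765 21891
print(fib_memo(20, memo_calls), memo_calls[0])     # 6765 21
```

The cache holds one entry per distinct subproblem, so the saving in time is paid for with memory proportional to the number of subproblems.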

Optimization techniques in dynamic programming improve time and space complexity. Dynamic programming algorithms seek optimal solutions. The optimal solution is obtained using heuristics defined in the problem set, and these heuristics depend on the objectives to be achieved. In addition, optimization of time and space complexity is achieved by using efficient data structures with well-defined, efficient operations.

Turing Machine

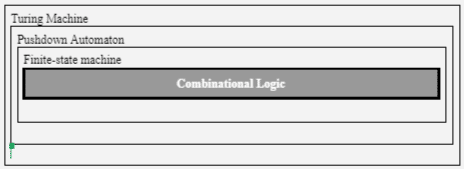

A Turing machine is an abstract machine that operates according to a set of rules. It examines the current input symbol and its present state to decide whether to execute the next instruction or terminate. The Turing machine is the theoretical model underlying computer programming languages. Figure 1 gives insight into dynamic programming: the combinational logic at the core of the Turing machine in Figure 1 is what dynamic programming uses to build recursive problem sets.
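
The rule-driven behavior described above can be sketched with a very small simulator. This is an illustrative one-tape machine, not a reconstruction of Figure 1; the rule table `FLIP_BITS`, the state names, and the `max_steps` safety limit are all assumptions.

```python
def run_turing_machine(rules, tape, state="start", blank="_", max_steps=10_000):
    """Run a one-tape machine until it reaches the 'halt' state.

    `rules` maps (state, symbol) to (symbol_to_write, move, next_state),
    where move is "L" or "R".
    """
    cells = dict(enumerate(tape))  # sparse tape: position -> symbol
    head = 0
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        symbol = cells.get(head, blank)
        # Decision: the present state and the symbol under the head pick the rule.
        write, move, state = rules[(state, symbol)]
        cells[head] = write
        head += 1 if move == "R" else -1
        steps += 1
    if not cells:
        return ""
    ordered = "".join(cells.get(i, blank) for i in range(min(cells), max(cells) + 1))
    return ordered.strip(blank)


# Hypothetical rule table: invert every bit, halt at the first blank cell.
FLIP_BITS = {
    ("start", "0"): ("1", "R", "start"),
    ("start", "1"): ("0", "R", "start"),
    ("start", "_"): ("_", "R", "halt"),
}

print(run_turing_machine(FLIP_BITS, "1011"))  # 0100
```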

Divide-and-Conquer Algorithms

Divide-and-conquer is a top-down programming technique that follows a recursive approach. The main problem is broken into subproblems, the subproblems are solved, and their results are combined to obtain the solution to the main problem.

A divide-and-conquer algorithm depends on four factors:
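
The divide, solve, and combine steps can be sketched with merge sort, a standard divide-and-conquer algorithm. This is one common way to write it, offered as an illustration.

```python
def merge_sort(items):
    """Return a new sorted list using divide-and-conquer."""
    if len(items) <= 1:
        return list(items)  # a list of 0 or 1 elements is already sorted
    # Divide: split the problem into two halves.
    mid = len(items) // 2
    left = merge_sort(items[:mid])
    right = merge_sort(items[mid:])
    # Combine: merge the two sorted halves into one sorted list.
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged


print(merge_sort([5, 2, 9, 1, 5, 6]))  # [1, 2, 5, 5, 6, 9]
```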

- Splitting factor

- Stability

- Dividing problem set into subsets using Data Value

- Serialization

The splitting factor is the number of subsets built from the problem set. A divide-and-conquer algorithm must split the problem into two or more subsets. Some divide-and-conquer algorithms have a splitting factor of 4 or even 7; Strassen's matrix multiplication, for example, recurses on seven subproblems.

Stability in divide-and-conquer is attained when the problem set is divided into two equal-sized subsets, that is, when the split is balanced. (This is a different notion from the order-preserving property of a stable sort.) Merge sort and binary tree algorithms are examples of stable divide-and-conquer algorithms. A stable divide-and-conquer algorithm executes more efficiently than an unbalanced one.

If the problem set cannot be divided into two equal-sized subsets, it has to be made stable using a stability factor. The stability factor can be a cost function, or any other function associated with the elements of the problem set.

A problem set can be divided into subsets around a particular data value taken from the set. Doing so may produce unbalanced subsets of the main problem set. This can be overcome by dividing at the median, which results in a stable divide-and-conquer algorithm.
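
The difference between dividing at an arbitrary data value and dividing at the median can be sketched by comparing subset sizes. The helper `split_by_value` and the sample data are assumptions; `statistics.median_low` is used because it always returns an element that is actually present in the data.

```python
import statistics


def split_by_value(items, pivot):
    """Partition items into those smaller than, equal to, and larger than pivot."""
    smaller = [x for x in items if x < pivot]
    equal = [x for x in items if x == pivot]
    larger = [x for x in items if x > pivot]
    return smaller, equal, larger


data = [1, 2, 3, 4, 5, 6, 7, 8, 9]

# Dividing at the first element of already-sorted data is as unbalanced as it gets.
smaller, equal, larger = split_by_value(data, data[0])
print(len(smaller), len(equal), len(larger))  # 0 1 8

# Dividing at the median gives two subsets of equal size.
smaller, equal, larger = split_by_value(data, statistics.median_low(data))
print(len(smaller), len(equal), len(larger))  # 4 1 4
```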

Serialization is the execution pattern followed in a divide-and-conquer algorithm. With serialization, the result of one subproblem may be fed as input to another subproblem. Identifying where such serialization occurs is the starting point for parallelization, because a strictly ordered, serialized divide-and-conquer algorithm rules out parallel execution of its subtasks.

To attain parallel execution of subtasks, every condition that forces subproblems to execute serially must be removed.
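
When no subtask needs the result of another, the subtasks can be handed to a pool of workers. The sketch below assumes a simple summation task; `chunked`, `parallel_sum`, and the default sizes are hypothetical names and values. It uses threads from the standard library, which in CPython demonstrate the structure more than they deliver a speed-up for CPU-bound work.

```python
from concurrent.futures import ThreadPoolExecutor


def chunked(items, size):
    """Split a list into consecutive chunks of at most `size` elements."""
    return [items[i:i + size] for i in range(0, len(items), size)]


def parallel_sum(items, chunk_size=1000, workers=4):
    """Sum independent chunks concurrently, then combine the partial sums."""
    chunks = chunked(items, chunk_size)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Each chunk is summed on its own; no chunk depends on another's result.
        partials = list(pool.map(sum, chunks))
    return sum(partials)


print(parallel_sum(list(range(10_000))))  # 49995000
```

Only the final combine step is serial, because it needs every partial sum as input.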

Data Mining

There are different types of operational processes: customer support, marketing, sales, and product delivery. These operations generate data. When this data is processed, it reveals patterns that can be used to further improve the operational process.

Data processing complexity increases with the volume of data. To process voluminous data, data engineers often choose the divide-and-conquer approach, as it splits the data into subparts and reduces processing overhead.
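
A rough sketch of this approach on operational data: records are split in half recursively, each part is counted separately, and the counts are merged. The record layout `(department, event)`, the sample records, and the function name `count_by_department` are made up for illustration.

```python
from collections import Counter


def count_by_department(records):
    """Count records per department by splitting the data and merging the counts."""
    if len(records) <= 2:
        # Small enough to process directly.
        return Counter(department for department, _ in records)
    mid = len(records) // 2
    # Process each half separately, then combine the two partial results.
    return count_by_department(records[:mid]) + count_by_department(records[mid:])


# Hypothetical operational records: (department, event).
records = [
    ("sales", "order placed"),
    ("customer support", "ticket opened"),
    ("sales", "order placed"),
    ("marketing", "campaign sent"),
    ("product delivery", "parcel shipped"),
    ("sales", "refund issued"),
]

print(count_by_department(records)["sales"])  # 3
```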

Difference between Dynamic Programming and Divide-and-Conquer

Dynamic Programming | Divide-and-Conquer |

